Given the public’s expectation for disclosure, it’s refreshing to see the Federal Motor Carrier Safety Administration take the position that some of the information it now disseminates isn’t fit for public consumption. The action is long overdue, but it’s welcome.

By summer’s end, the agency plans to remove carriers’ accident safety evaluation area (SEA) scores and overall SafeStat scores – based in part on accident SEA scores – from its public websites. FMCSA uses SafeStat to target its oversight toward high-risk carriers. Federal and state law enforcement officials will still have access to those scores, and each carrier will have access to its own scores. FMCSA says the scores will be restored to public websites once it can ensure more reliable crash data.

One of SafeStat’s biggest weaknesses is the disparity among states in the timeliness, completeness and accuracy of the crash data they report to FMCSA. An audit by the Department of Transportation Office of Inspector General showed, for example, that several states had not reported any crashes for the six-month period analyzed. At least two of those states, Pennsylvania and Florida, may have had hundreds of truck-involved crashes during that period.

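To appreciate the scope of this problem, consider how SafeStat works and how geographically unbalanced data quality is today. If the accident SEA were based solely on a carrier’s own accident rate, slow data would simply make some carriers look better than they are. In most cases, however, that is not the methodology. Instead, carriers are ranked against their peers, so crashes missing from one carrier’s record also push carriers with complete records higher in the ranking, making them look worse.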

Suppose two carriers have the same number and severity of accidents during a given period of time. If one operates only in states where data is complete and timely and the other operates only in states where data quality is poor, the latter will look much better in SafeStat.
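To make that arithmetic concrete, here is a minimal Python sketch of peer ranking. It is not FMCSA’s SafeStat formula: the carrier names, fleet sizes, reporting rates and the simple percentile calculation are all hypothetical, chosen only to show how under-reported crashes move carriers within a ranking.

```python
from dataclasses import dataclass


@dataclass
class Carrier:
    name: str
    actual_crashes: int
    power_units: int
    reporting_rate: float  # hypothetical share of crashes the states actually report

    @property
    def reported_crashes(self) -> int:
        # Only crashes that states forward to FMCSA enter the calculation.
        return round(self.actual_crashes * self.reporting_rate)

    @property
    def crash_rate(self) -> float:
        # Reported crashes per power unit (a simplified, assumed normalization).
        return self.reported_crashes / self.power_units


def percentile_rank(target: Carrier, peers: list[Carrier]) -> float:
    """Percent of peers with a strictly lower crash rate (higher means worse)."""
    lower = sum(1 for p in peers if p.crash_rate < target.crash_rate)
    return 100.0 * lower / len(peers)


peers = [
    Carrier("West Co", 6, 50, 1.0),  # operates where reporting is complete
    Carrier("East Co", 6, 50, 0.5),  # identical record, half its crashes unreported
    Carrier("Peer A", 2, 50, 1.0),
    Carrier("Peer B", 4, 50, 1.0),
    Carrier("Peer C", 8, 50, 1.0),
]

for carrier in peers[:2]:
    print(carrier.name, carrier.reported_crashes, percentile_rank(carrier, peers))
# West Co 6 60.0
# East Co 3 20.0
```

Under these assumptions, East Co lands in the 20th percentile while West Co sits at the 60th, even though their actual records are identical. If East Co’s crashes were fully reported, West Co would fall to the 40th percentile, which is the peer-ranking effect: one state’s missing data also makes other carriers look worse.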

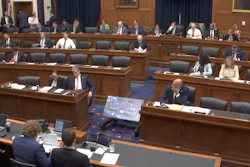

This isn’t a far-fetched scenario. According to a color-coded map FMCSA recently posted at Analysis & Information Online, all but two of the states with poor data quality are east of the Mississippi River. As a result, carriers operating primarily in the East will tend to have better accident SEA scores than carriers with similar safety performance operating primarily in the West. Because the accident SEA counts double in the overall SafeStat score, that regional imbalance carries through to the overall rankings, and the current situation can be unfair.
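The effect of the double weighting can be sketched with simple arithmetic. In the snippet below, only the 2.0 weight on the accident SEA comes from the article; the 1.0 weights on the other areas and all of the SEA values are hypothetical placeholders, not FMCSA’s published formula.

```python
# Only the accident weight (2.0) reflects the article; the other weights
# are hypothetical placeholders chosen purely for illustration.
WEIGHTS = {"accident": 2.0, "driver": 1.0, "vehicle": 1.0, "safety_mgmt": 1.0}


def overall_score(sea: dict[str, float]) -> float:
    """Weighted sum of safety evaluation area (SEA) values."""
    return sum(WEIGHTS[area] * value for area, value in sea.items())


west = {"accident": 80.0, "driver": 70.0, "vehicle": 70.0, "safety_mgmt": 70.0}
east = dict(west, accident=60.0)  # same record, but fewer crashes reported

print(overall_score(west) - overall_score(east))  # 40.0
```

Under these assumptions, a 20-point gap in the accident SEA that stems purely from reporting becomes a 40-point gap in the overall score.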

FMCSA has taken other steps to promote data fairness, including implementation of DataQs, a system that allows carriers to file concerns about data that FMCSA and states collect. The agency also is requiring states to describe their data collection and improvement strategies in all enforcement funding applications.

These efforts won’t fix all of SafeStat’s problems. A big issue is the poor quality of the normalizing data, such as the number of power units and drivers, used in some SafeStat calculations. Here the blame falls on carriers themselves. By failing to update their Form MCS-150s periodically, carriers may look smaller than they are, which skews the calculations against them. Some unscrupulous carriers may even exploit the MCS-150 in reverse, overstating their size to make themselves look better. Although FMCSA now requires Form MCS-150 updates every two years, the agency hasn’t yet gotten tough on deadlines or verification. It needs either an independent source of normalizing data or a quick, simple way to audit MCS-150 information.
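Here is a minimal sketch of why stale or inflated MCS-150 figures matter, assuming a simple crashes-per-power-unit ratio. The function name and fleet numbers are hypothetical, and SafeStat’s actual normalization may differ.

```python
def crashes_per_power_unit(crashes: int, power_units: int) -> float:
    """Hypothetical normalization: crashes divided by reported fleet size."""
    if power_units <= 0:
        raise ValueError("power_units must be positive")
    return crashes / power_units


crashes = 10
print(crashes_per_power_unit(crashes, 100))  # accurate MCS-150: 0.1
print(crashes_per_power_unit(crashes, 50))   # stale MCS-150, fleet understated: 0.2
print(crashes_per_power_unit(crashes, 200))  # inflated MCS-150, fleet overstated: 0.05
```

Halving the reported fleet doubles the apparent crash rate, and doubling it halves the rate, with no change in the underlying safety record.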

Another question is whether FMCSA is using the right normalizing data. Many carriers contend that vehicle miles traveled is a more accurate measure of highway risk exposure than the number of power units. That’s a fair point, but factors such as traffic congestion and climate mean that not all miles are equal.

SafeStat will never satisfy everyone. You can, however, demand consistency, timeliness, accuracy and completeness. It’s only fair.